AI-native is not a feature. It's the difference between intelligence and autocomplete.

Why the distinction matters operationally

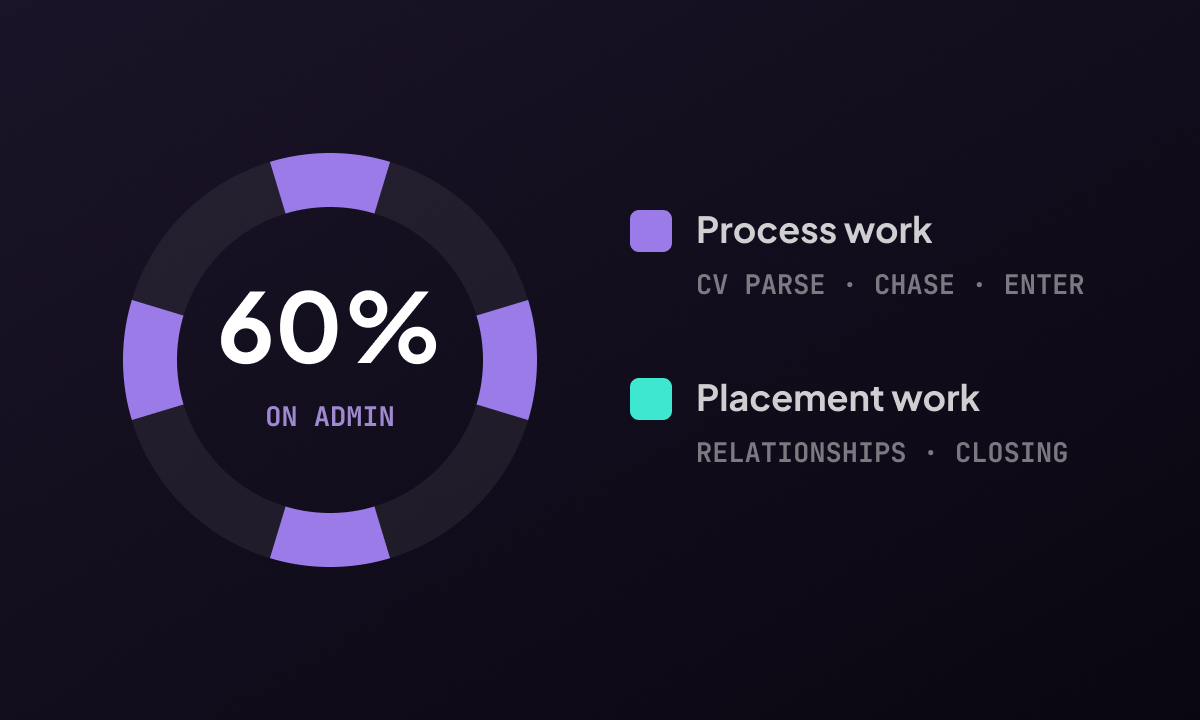

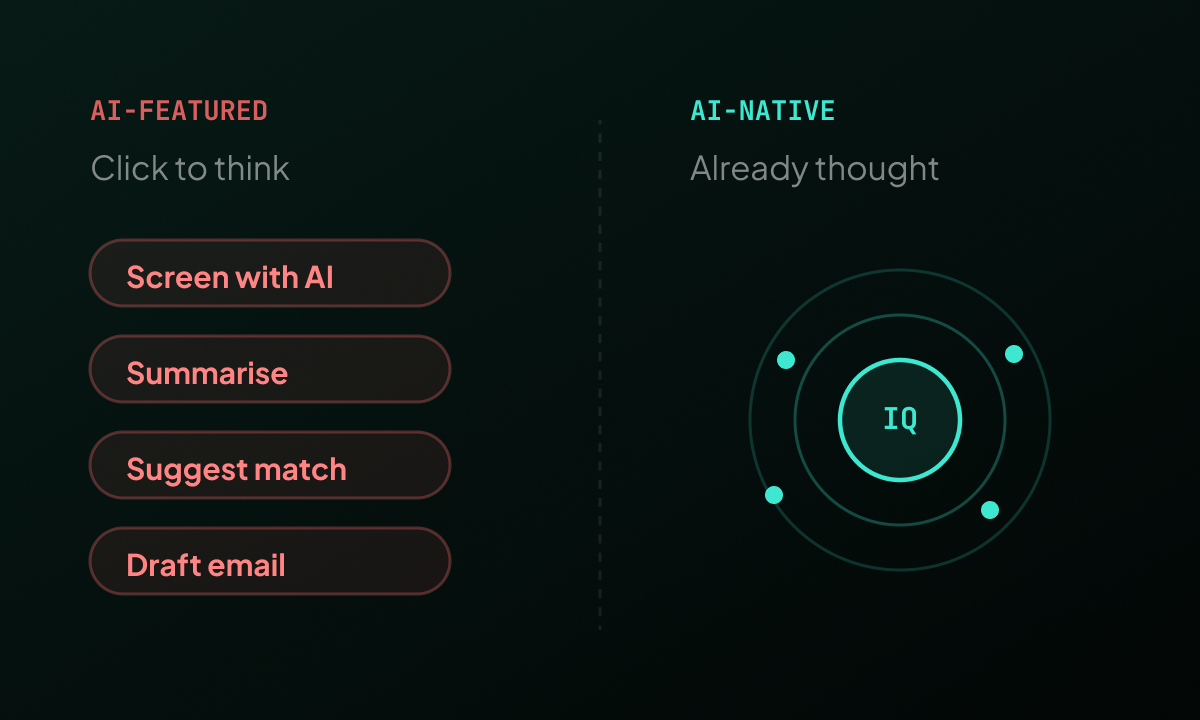

Consider what happens when a new candidate applies for a role in your database. In an AI-featured system, the AI processes that candidate when a recruiter clicks the screening button. Until then, the candidate sits in the pipeline unscored. The AI assessment depends on the recruiter being present to initiate it.

In an AI-native system, the candidate is processed when they arrive. The platform matches them against every active role in the pipeline, scores them against each, identifies if they are a stronger fit for a role other than the one they applied for, and surfaces that insight the next time a recruiter looks at either the candidate or the role. All of this happens without a recruiter present, often overnight, so that by the time anyone logs in, the intelligence is already there.

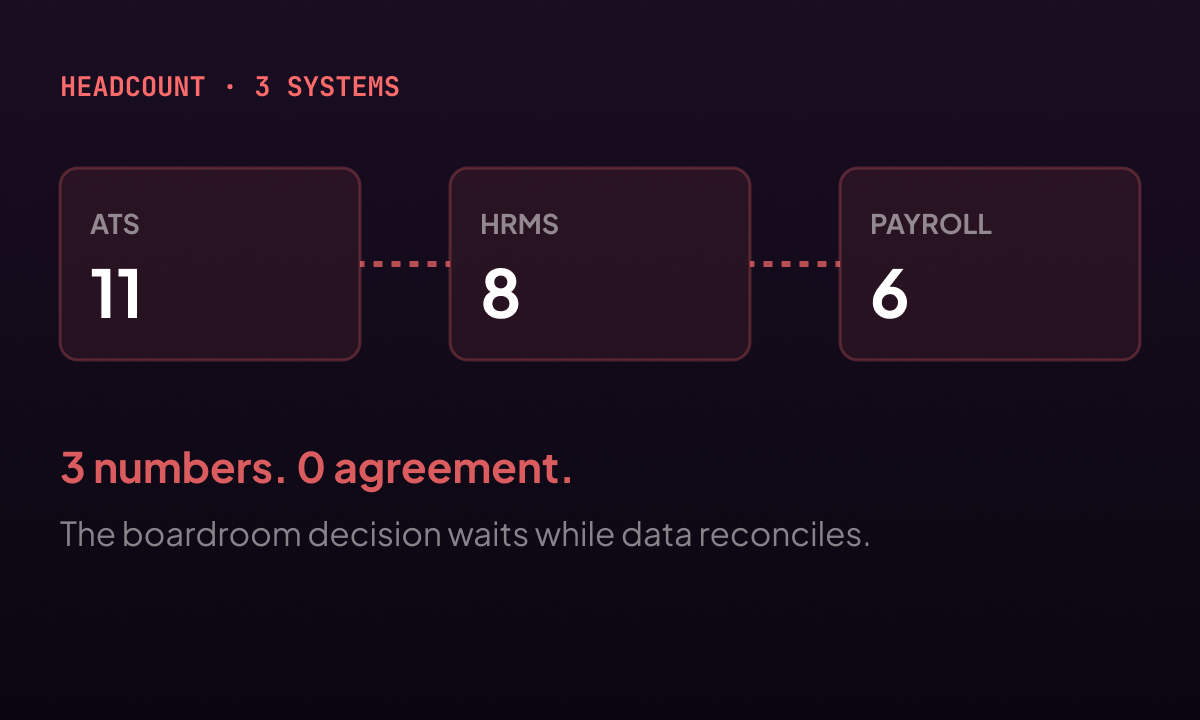

The data access problem

AI quality is determined by the data it can see. The more complete and current the data, the more accurate the intelligence.

An AI that was added as a feature layer to an existing platform typically has access to a subset of the data in that platform. It reads what it was integrated with. It does not, by default, have access to the commercial context of a client relationship, the historical outcomes of similar placements, or the compliance status of a candidate previous engagements. That information exists in the system. The AI layer does not reach it.

An AI built into the data model from the beginning has access to the full picture. Every field, every note, every outcome from every previous interaction is available as signal. The intelligence it produces is not just faster than a human making the same assessment. It is richer, because it is drawing on context that no individual recruiter could hold simultaneously.

The open-source dimension

There is a third dimension to the AI-native question that rarely appears in product comparisons: whether the AI model is open or closed.

Proprietary AI is a black box. The vendor can describe its capabilities. They cannot show you the model. You cannot verify what it was trained on, inspect whether it carries biased signal, or fine-tune it to perform better on your specific data. You are trusting the vendor's assessment of their own product.

Open-source AI is the opposite. The model is readable. It can be inspected, audited, deployed in your environment, and improved on your data. LinnkIQ is built on open-source AI. The choice was deliberate. It means the AI in the platform is transparent, auditable, and owned by the organisations that use it, not the vendor that built the platform.

The right question to ask in any evaluation

Ask any vendor to demonstrate the AI processing a candidate without a recruiter triggering it. Ask where the model runs. Ask whether the AI has access to commercial and historical data, or only to candidate profiles. The answers locate the platform precisely on the spectrum between AI-featured and AI-native.

"The AI that works while you sleep is worth more than the AI that works when you click. Only one of them changes what you find when you arrive."