Your AI is making hiring decisions. Can you explain any of them?

What explainable AI actually means

Explainable AI means that every decision an AI system makes can be traced back to a clear, readable reason. Not a probability score. Not a confidence rating. A reason. Which criteria matched. Which did not. What weighting was applied. What would change the outcome.

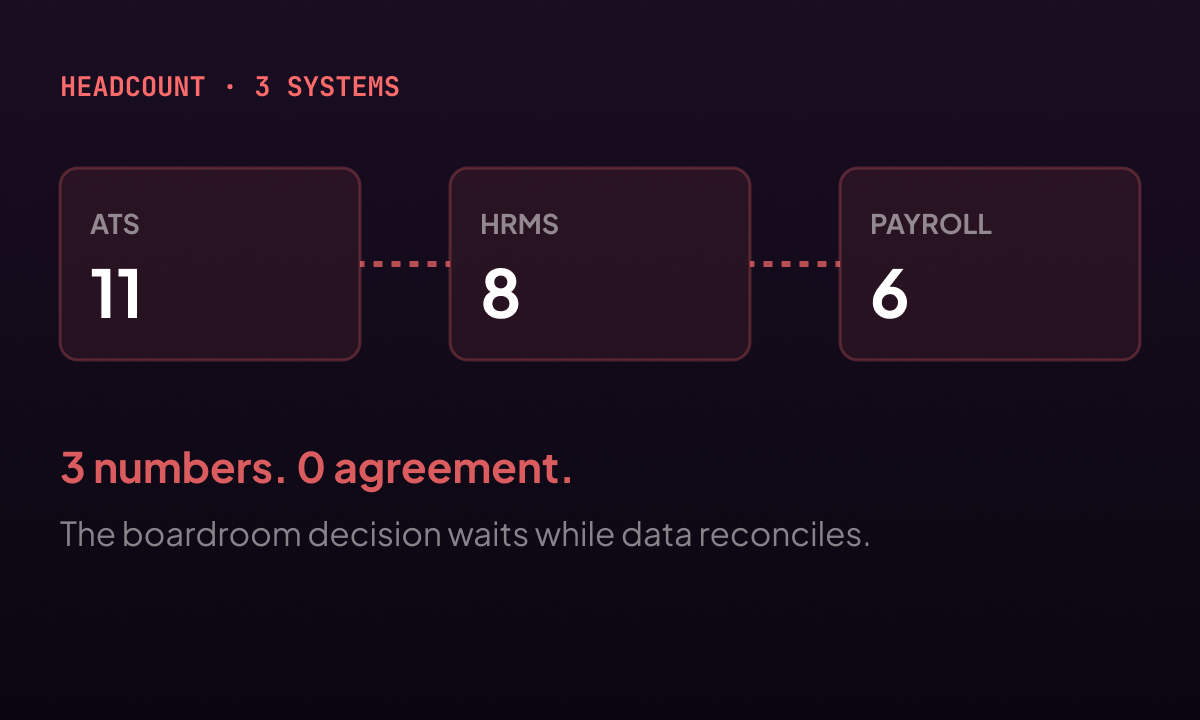

In a hiring context, explainability is the difference between an AI that says "candidate score: 74" and an AI that says the candidate matched on five of seven criteria, with strong alignment on financial analysis and stakeholder management, a gap on regulatory reporting experience, and a notice period within the required range. The first is an output. The second is a decision a recruiter can act on, explain to a client, and stand behind if questioned.
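To make the contrast concrete, here is a minimal sketch of a scorer that returns its reasons together with the number. The criteria names beyond those mentioned above, the weights, and the function names are all assumptions made for illustration; this is not a description of any particular vendor's model.

```python
from dataclasses import dataclass


@dataclass
class Explanation:
    score: int
    matched: list[str]
    gaps: list[str]

    def summary(self) -> str:
        total = len(self.matched) + len(self.gaps)
        return (
            f"Score {self.score}: matched {len(self.matched)} of {total} criteria. "
            f"Matched: {', '.join(self.matched) or 'none'}. "
            f"Gaps: {', '.join(self.gaps) or 'none'}."
        )


def score_candidate(weights: dict[str, int], met: set[str]) -> Explanation:
    """Score a candidate and keep the reasons alongside the number."""
    matched = [name for name in weights if name in met]
    gaps = [name for name in weights if name not in met]
    total = sum(weights.values())
    score = round(100 * sum(weights[name] for name in matched) / total)
    return Explanation(score, matched, gaps)


def score_if_gap_closed(weights: dict[str, int], met: set[str], gap: str) -> int:
    """Answer 'what would change the outcome' for one missing criterion."""
    return score_candidate(weights, met | {gap}).score


# Hypothetical role definition: seven criteria with invented weights.
ROLE_WEIGHTS = {
    "financial analysis": 20,
    "stakeholder management": 18,
    "notice period within range": 12,
    "budgeting": 12,
    "team leadership": 12,
    "regulatory reporting": 16,
    "sql": 10,
}

# Hypothetical candidate: meets five of the seven criteria.
candidate_met = {
    "financial analysis",
    "stakeholder management",
    "notice period within range",
    "budgeting",
    "team leadership",
}

explanation = score_candidate(ROLE_WEIGHTS, candidate_met)
print(explanation.summary())
print(score_if_gap_closed(ROLE_WEIGHTS, candidate_met, "regulatory reporting"))
```

The number is the same in both cases. What changes is that the matched criteria, the gaps, and the effect of closing a gap travel with it, so a recruiter has something to explain.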

The distinction matters more today than it did two years ago. Regulators are moving in the same direction worldwide: if AI is involved in a hiring decision, the organisation deploying it needs to be able to explain how that decision was reached. The EU AI Act, for example, classifies AI systems used for recruitment and candidate selection as high-risk. In a growing number of jurisdictions, individual candidates have the right to ask how an automated system assessed them. Employers who cannot answer that question are not just facing an awkward conversation. They are facing a compliance gap.

Why most platforms cannot do this today

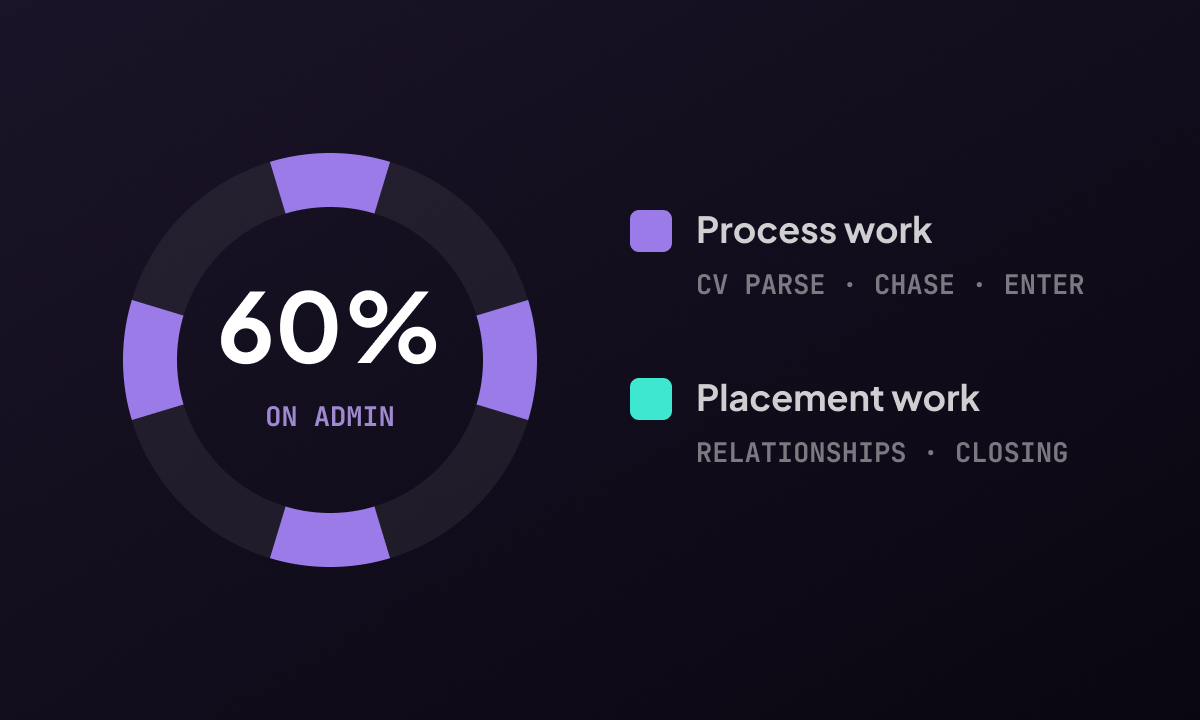

The honest answer is that most workforce AI was not designed with explainability in mind. It was designed with accuracy in mind. The two are not always compatible. The most statistically powerful models often produce outputs that are genuinely difficult to interpret, even for their developers.

A proprietary AI model trained on aggregated hiring data can tell you that a candidate is a strong match with high confidence. What it cannot always tell you is why. The signal might be in a pattern the model detected across thousands of historical hires. That pattern might not correspond to any criterion a human recruiter would recognise or endorse. And it might, without anyone intending it, reflect historical biases baked into the data the model learned from.

This is not a hypothetical. AI hiring tools have been found to downrank candidates based on factors that were proxies for protected characteristics. University attended. Gaps in employment. Types of previous employer. None of these are explicitly discriminatory. All of them can carry discriminatory signal when an AI learns from historical data that was itself shaped by bias.
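A small sketch shows how a neutral-looking factor can carry that signal. The records below are invented purely for illustration, and the group labels, the shortlisting rule, and the function names are assumptions. The rule never sees the group label, only whether the candidate has an employment gap, yet the selection rates still diverge because gaps are unevenly distributed between the groups.

```python
from collections import Counter

# Invented records for illustration only: (group label, has employment gap).
# In this made-up data, gaps are more common in group B than in group A.
candidates = (
    [("A", False)] * 8 + [("A", True)] * 2
    + [("B", False)] * 4 + [("B", True)] * 6
)


def shortlist(has_employment_gap: bool) -> bool:
    """A rule that never sees the group label, only the employment gap."""
    return not has_employment_gap


def selection_rates(records) -> dict[str, float]:
    """Share of each group that the rule shortlists."""
    totals, selected = Counter(), Counter()
    for group, has_gap in records:
        totals[group] += 1
        if shortlist(has_gap):
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


rates = selection_rates(candidates)
# Ratio of the lowest selection rate to the highest; 1.0 would mean parity.
ratio = min(rates.values()) / max(rates.values())
print(rates, ratio)
```

In this invented example, group B is shortlisted at half the rate of group A, although the rule contains no reference to either group. An audit of this kind is only possible when the factors behind each decision are visible.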

What good looks like in practice

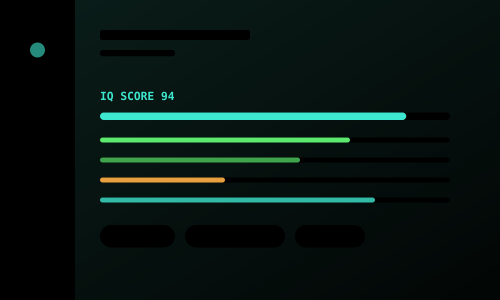

The organisations getting this right have built explainability into the AI at the level of architecture, not as a reporting feature added afterwards. Every scoring decision produces a reasoning chain at the moment it is made. The reasons are stored. They can be retrieved, reviewed, challenged, and used in a regulatory audit.
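As a rough illustration of what "stored at the moment it is made" can look like, the sketch below writes the reasoning into the same transaction as the score, using Python's built-in `sqlite3` module. The table layout, field names, and the simple matching rule are assumptions for the example, not a description of any specific platform.

```python
import json
import sqlite3
from datetime import datetime, timezone


def open_audit_log(path: str = ":memory:") -> sqlite3.Connection:
    """Create the decision log if needed (hypothetical schema)."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS decisions (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               candidate_id TEXT NOT NULL,
               role_id TEXT NOT NULL,
               decided_at TEXT NOT NULL,
               score INTEGER NOT NULL,
               reasoning TEXT NOT NULL
           )"""
    )
    return conn


def score_and_record(conn, candidate_id, role_id, required, candidate_skills):
    """Score a candidate and persist the reasons in the same step."""
    matched = sorted(required & candidate_skills)
    gaps = sorted(required - candidate_skills)
    score = round(100 * len(matched) / len(required))
    reasoning = {
        "matched": matched,
        "gaps": gaps,
        "data_considered": sorted(candidate_skills),
    }
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO decisions "
            "(candidate_id, role_id, decided_at, score, reasoning) "
            "VALUES (?, ?, ?, ?, ?)",
            (candidate_id, role_id, datetime.now(timezone.utc).isoformat(),
             score, json.dumps(reasoning)),
        )
    return score


def explain(conn, candidate_id, role_id):
    """Retrieve every recorded decision, with reasons, for review or audit."""
    rows = conn.execute(
        "SELECT decided_at, score, reasoning FROM decisions "
        "WHERE candidate_id = ? AND role_id = ? ORDER BY id",
        (candidate_id, role_id),
    ).fetchall()
    return [
        {"decided_at": decided_at, "score": score, **json.loads(reasoning)}
        for decided_at, score, reasoning in rows
    ]


log = open_audit_log()
required = {"financial analysis", "stakeholder management",
            "regulatory reporting", "budgeting"}
skills = {"financial analysis", "stakeholder management", "budgeting"}
score = score_and_record(log, "cand-001", "role-042", required, skills)
trail = explain(log, "cand-001", "role-042")
print(score, trail[0]["gaps"])
```

Because the score and its reasons are written together, there is no later step in which an explanation has to be reconstructed. The record retrieved for an audit is the one produced when the decision was made.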

LinnkIQ's IQ Score works this way. When the platform scores a candidate against a role, the score includes a full breakdown: which criteria from the job qualification form were matched, which were not, what the candidate's stated experience covers, and where the gaps are. A recruiter reviewing a shortlist sees not just who scored highest but why. A client questioning a recommendation can be walked through the reasoning. A regulator asking for an audit trail gets one. That is not a feature the platform added. It is how the AI was built.

The question to bring to your next platform review

If you use AI in any part of your hiring or candidate management process, ask your vendor one question: can you show me the complete reasoning behind a specific AI decision, right now, from your live system?

Not a diagram of how the model generally works. Not a description of the scoring methodology. A live demonstration, with a real candidate record, showing exactly what data was considered, what the AI concluded, and why. The answer tells you everything you need to know about the architecture underneath the product.

"An AI that tells you a candidate scored 74 is not explaining a decision. It is producing a number. The two are not the same."