The hidden cost of AI that runs on someone else's servers

How proprietary AI pricing actually works

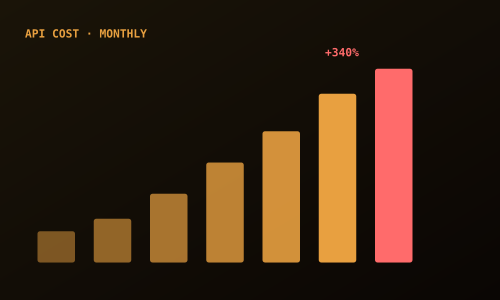

Most AI platforms built on large language models pay per token for every inference call they make. A token is roughly three-quarters of a word. A screening interaction that reads a candidate profile and generates a match score might consume several thousand tokens. Multiply that by hundreds of candidates per day across a busy agency, and the underlying compute cost is significant before the vendor adds its margin.
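To make the arithmetic concrete, here is a rough sketch in Python. Every number in it is an illustrative assumption, not a quote from any vendor: 4,000 tokens per screening interaction, 300 candidates per day, 22 working days per month, and a hypothetical price of 0.01 per 1,000 tokens. The function name `monthly_inference_cost` is also invented for this example.

```python
def monthly_inference_cost(tokens_per_interaction, interactions_per_day,
                           price_per_1k_tokens, working_days=22):
    """Estimate raw monthly token spend, before any vendor margin.

    All inputs are assumptions the reader supplies; nothing here
    reflects a real provider's pricing.
    """
    tokens_per_month = tokens_per_interaction * interactions_per_day * working_days
    return tokens_per_month / 1000 * price_per_1k_tokens


# Hypothetical agency: 4,000 tokens per screening, 300 screenings a day,
# at an assumed 0.01 per 1,000 tokens.
cost = monthly_inference_cost(4000, 300, 0.01)
print(f"{cost:.2f}")  # 264.00
```

The point is less the specific figure than the shape of the formula: cost scales linearly with both volume and tokens per interaction, so doubling either doubles the underlying bill.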

That cost gets passed through, usually as part of a tiered pricing model where the lower tiers look affordable and the tiers that match real operational volumes look very different. By the time an organisation reaches the volume where the AI is genuinely useful, it is in a pricing band it never evaluated during procurement.
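A quick way to test this during procurement is to look up the tier for the volume you expect to run, not the volume you trial with. The sketch below assumes a made-up three-tier price list; the caps, fees, and the `tier_for_volume` helper are all hypothetical and should be replaced with the figures from the vendor's actual pricing page.

```python
# Hypothetical tiers: (monthly interaction cap, monthly fee).
# These numbers are invented for illustration, not taken from any vendor.
TIERS = [
    (1_000, 99),
    (5_000, 399),
    (20_000, 1_499),
]


def tier_for_volume(monthly_interactions):
    """Return the (cap, fee) of the cheapest tier that covers the volume.

    Returns None when the volume exceeds every published tier, which in
    practice usually means custom pricing.
    """
    for cap, fee in TIERS:
        if monthly_interactions <= cap:
            return cap, fee
    return None


pilot = tier_for_volume(500)         # a small trial
production = tier_for_volume(6_600)  # 300 candidates a day over 22 working days
print(pilot, production)
```

Under these assumed tiers, the pilot lands in the cheapest band while production volume lands in the most expensive one, which is the gap the paragraph above describes.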

The data question nobody asks at procurement

There is a second cost to running workforce AI on someone else's infrastructure, and it is harder to put a number on.

When a candidate's profile is processed by a proprietary AI model, that processing happens on the model provider's servers. The data travels from your system to theirs, the inference runs, and the result returns to you. At every step, your candidate's name, employment history, qualifications, and personal details pass through third-party infrastructure.

For organisations operating under modern data protection laws, this raises a straightforward question: where exactly is that data processed, and under whose policies? A compliance certification on a vendor's website is not the same as your candidate data staying within your jurisdiction.

"The monthly fee on the pricing page is not the cost of AI at scale. The real cost reveals itself gradually, usually at the point when switching has become genuinely difficult."